Introducing Scaling Mode for TabPFN: Foundation Models for Tabular Data on Millions of Rows

Over the past 2 years, TabPFN has gone from a research prototype for tiny datasets to a production‑ready foundation model powering hundreds of tabular ML applications.

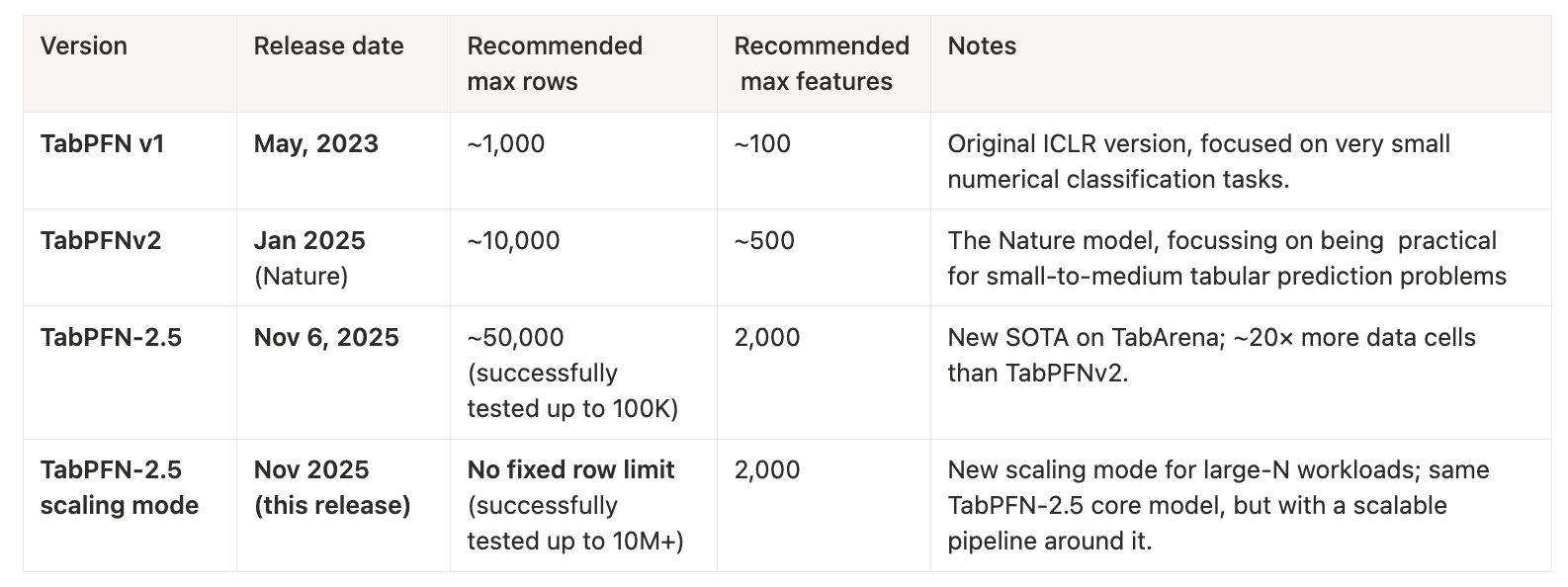

- TabPFN v1 started with an idea: can we use a transformer to solve tabular classification problems in a single forward pass? But it was limited to tiny datasets up to 1,000 rows and 100 features.

- TabPFNv2, published in Nature in January 2025, scaled that idea to mainstream, practical, “small‑to‑medium” tabular prediction, with strong performance up to 10,000 samples and 500 features, allowing thousands of use cases since.

- TabPFN‑2.5, released on November 6, 2025, pushed another big step, yielding state-of-the-art performance on all of TabArena, up to 100,000 rows and 2,000 features (even though we had only aimed for 50,000 rows).

Today, we’re excited to introduce Scaling Mode: a new operating mode that effectively lifts the remaining constraints on dataset size.

With TabPFN-2.5 Scaling Mode, we scale seamlessly to orders of magnitude larger datasets and got strong performance on datasets up to 10 million data points, that is:

- 100× more rows than what we tackled with TabPFN‑2.5 just three weeks ago

- 1,000× more rows than the original TabPFNv2 release in January

…and with no fixed upper limit on the number of rows beyond your hardware budget.

Why we built Scaling Mode

TabPFN’s original mission was to yield push-button SOTA performance for small tabular ML.

By amortizing learning into a single large pretraining phase, TabPFN lets you:

- skip hyperparameter tuning

- avoid task‑specific training

- get strong predictions in one forward pass

With TabPFNv2, our recommended size limit was “up to 10K samples and 500 features.”

But many teams quickly hit the same question:

“Can I also use TabPFN on my large dataset?”

With TabPFN‑2.5, we achieved SOTA performance on TabArena (which has up to 100K rows), but still, many production workloads live well beyond that regime.

Scaling Mode is our answer: a way to keep the TabPFN foundation model and user experience, while removing the size constraints and enabling datasets with millions of rows.

What exactly is TabPFN-2.5 Scaling Mode?

Conceptually, Scaling Mode is a new operating mode on top of the TabPFN-2.5 model:

- Like “Thinking Mode” in LLMs, it wraps around a core model, keeping the same 2,000‑feature limit as TabPFN‑2.5.

- It removes the fixed row limit: the system is designed to work with arbitrarily large training sets, constrained only by your compute and memory.

- In our internal tests, it easily scales up to 10 million rows, suggesting that we can go much higher yet.

The Scaling Mode introduces a new pipeline around the model that is tailored for large‑N workloads. The key message is simple: You can now bring arbitrary-sized datasets to TabPFN.

Results for 1M to 10M Rows

We benchmarked Scaling Mode on our internal dataset collection, ranging from 1M to 10M rows and covering applications from industry and science. We compared the three standard gradient boosting libraries CatBoost, XGBoost, LightGBM, and TabPFN-2.5 (with a naive approach that subsamples 50K rows).

The results below show that Scaling Mode enables TabPFN-2.5 to continue improving performance with more data: it scales dramatically better than TabPFN-2.5 does with a naive subsampling approach, and there is no evidence of the gap to gradient boosting shrinking as we scale up!

While the plot above shows scores normalized across datasets, the following plot on the largest dataset we tested also shows that ROC AUC continues to improve strongly with more data.

Timeline & scaling summary

To put this new mode in context, here’s the evolution of TabPFN’s recommended operating regime over time showing the dramatic progress in this new field.

If you look at this purely in terms of rows:

- v1 → v2: 10× more rows

- v2 → 2.5: 5-10× more rows

- 2.5 → scaling mode (tested): ≥200× more rows (50K → 10M)

And if you compare TabPFNv2 to TabPFN-2.5 Scaling Mode: From ~10K rows to 10M rows: a 1,000× increase in dataset size in less than a year.